“Just buy a tool with a cord!” Like it was THAT easy! Battery-powered devices can be a convenience or a necessity. A battery-powered screwdriver or drill is simply easier to handle, and if you are in the middle of nowhere with no power outlet in sight, there is a blackout, the electrician only comes after you have finished, or you are the electrician yourself, you would be lost without battery-powered tools! And if you build an off-grid solar system, a huge battery pack is a must. So, how do we measure batteries?

Amp-hours

It’s the number stated on every battery (though sometimes it is hidden in the datasheet)! Big batteries, like powerwalls, power tool batteries and car batteries, are usually rated in amp-hours (Ah), while smaller ones used in portable devices are rated in milliamp-hours (mAh). The quick math: a bigger number means a longer runtime. But what does it actually mean? It is current multiplied by time, so, roughly, a 1 Ah battery can provide 1 A for 1 hour, 10 A for 0.1 hour, or 0.1 A for 10 hours. But life isn’t that easy!
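The “current * time” rule can be written as a tiny helper. This is a minimal sketch of the ideal case only (the function name is made up for illustration); it ignores every loss discussed below, so treat its result as an optimistic upper bound.

```python
def ideal_runtime_hours(capacity_ah: float, load_a: float) -> float:
    """Ideal runtime in hours: capacity (Ah) divided by a constant load (A).

    Real batteries deliver less at higher loads, so treat this as an
    optimistic upper bound rather than a prediction.
    """
    if load_a <= 0:
        raise ValueError("load current must be positive")
    return capacity_ah / load_a


if __name__ == "__main__":
    # The three cases from the paragraph above, for a 1 Ah battery
    for load_a in (1.0, 10.0, 0.1):
        print(f"1 Ah at {load_a} A -> {ideal_runtime_hours(1.0, load_a):.1f} h")
```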

Batteries have electrolytes working inside them. Capacitors can charge and discharge at fascinating speeds (because they are “only” electrostatic devices), but in a battery the chemicals have to do chemistry, and that depends on a lot of variables, like the electrode size, the temperature, and the connected load!

Batteries usually have disproportionately higher losses at higher loads, so the runtime of a fictional 1 Ah battery could look like this:

- 100 hours with 0.01 A load (this is how it’s rated)

- 9.5 hours with 0.1 A (slightly less than 10)

- 0.8 hours with 1 A (20% less!)

- 0.05 hours with 10 A (half of the ideal 0.1 hour, just terrible)
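Multiplying each load by its runtime shows how much charge the fictional battery actually delivers. This sketch only re-does the arithmetic on the made-up numbers from the list above; the names are illustrative, and no real battery data is implied.

```python
RATED_AH = 1.0  # the fictional battery's rated capacity

# (load current in A, runtime in h), copied from the fictional list above
FICTIONAL_RUNS = [(0.01, 100.0), (0.1, 9.5), (1.0, 0.8), (10.0, 0.05)]


def delivered_ah(load_a: float, runtime_h: float) -> float:
    """Charge actually delivered at a constant load: current * time."""
    return load_a * runtime_h


def percent_of_rated(load_a: float, runtime_h: float,
                     rated_ah: float = RATED_AH) -> float:
    """Delivered charge as a percentage of the rated capacity."""
    return 100.0 * delivered_ah(load_a, runtime_h) / rated_ah


if __name__ == "__main__":
    for load_a, runtime_h in FICTIONAL_RUNS:
        print(f"{load_a:>5} A for {runtime_h:>6} h -> "
              f"{delivered_ah(load_a, runtime_h):.2f} Ah "
              f"({percent_of_rated(load_a, runtime_h):.0f}% of rated)")
```

The delivered charge drops from 100% of the rating at 0.01 A to 50% at 10 A, which is the “disproportionate losses” effect in numbers.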

C rating

A quick detour, but we must talk about the C rating. Multiply your Ah rating by the C rating: that’s how many amps you can comfortably draw from that battery! Let’s take some examples from datasheets and product descriptions:

- Rated Capacity (0.2C): 1050mAh, High Rate Discharge Capacity (1C): 1.05 Ah * 1C = 1.05 A

- 800mAh 3S1P 30C Lipo: 0.8 Ah * 30 = 24 A

- Nano-tech 460mAh 3S1P 25-40C: 0.46 Ah * 25 = 11.5 A to 0.46 Ah * 40 = 18.4 A
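The same “capacity * C rating” multiplication for the examples above, as a small sketch (the helper name is hypothetical). Capacity is given in mAh, as on the labels, and converted to Ah first.

```python
def max_current_a(capacity_mah: float, c_rating: float) -> float:
    """Maximum current (A) = capacity converted to Ah * C rating."""
    return capacity_mah / 1000.0 * c_rating


if __name__ == "__main__":
    # The datasheet / product-description examples above
    examples = [
        ("1050mAh, 1C", 1050, 1),
        ("800mAh 3S1P 30C", 800, 30),
        ("460mAh 3S1P 25C (lower rating)", 460, 25),
        ("460mAh 3S1P 40C (higher rating)", 460, 40),
    ]
    for label, mah, c in examples:
        print(f"{label}: {max_current_a(mah, c):.2f} A")
```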

The C rating tells you (almost) nothing about losses at higher loads or about the precise discharge curve; it only tells you the maximum allowed continuous current. So if our fictional battery were the first real example, we wouldn’t be allowed to draw 10 A from it at all!

But let’s check the “25-40C”: those are two numbers! The lower C rating is the continuous, nominal load, which can be provided as long as the battery has charge left. The second, bigger one (if present) tells us two things: the peak current, usually allowed only for a few seconds, and that this is a high-power battery! Higher C ratings usually mean more expensive (and better) batteries.
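A two-number rating like “25-40C” can be read as two limits. This sketch, with a hypothetical function name, checks a load against the continuous limit first and then against the optional burst limit; how long a burst may last is manufacturer-specific and is not modelled here.

```python
from typing import Optional


def classify_load(capacity_ah: float, load_a: float,
                  c_continuous: float, c_burst: Optional[float] = None) -> str:
    """Compare a load current against the continuous and (optional) burst C ratings."""
    if load_a <= capacity_ah * c_continuous:
        return "continuous OK"
    if c_burst is not None and load_a <= capacity_ah * c_burst:
        # Allowed only for a short time ("usually a few seconds")
        return "burst only"
    return "exceeds rating"


if __name__ == "__main__":
    # 460 mAh, 25-40C pack from the examples above
    for load_a in (10.0, 15.0, 20.0):
        print(f"{load_a} A -> {classify_load(0.46, load_a, 25, 40)}")
```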

A quick calculation using amp-hours

Let’s assume a solar-powered weather station with battery backup in the garden. The average load of the system is 100 mA, and we want a lithium-ion battery that lasts for 24 hours (because of rainy days). So, 100 mA * 24 hours = 2.4 Ah. The nearest genuine battery (cheap fakes exist!) is 2.5 Ah, so this should be enough, right?
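The amp-hour-only sizing from the paragraph above can be sketched like this. The list of available battery sizes is purely hypothetical, and the function names are made up for illustration; the point is to reproduce the reasoning, not to endorse it.

```python
def required_ah(avg_load_a: float, hours: float) -> float:
    """Amp-hours needed to supply an average load for a given time."""
    return avg_load_a * hours


def pick_battery(required: float, available_ah: list[float]) -> float:
    """Smallest available capacity that is at least the required capacity."""
    candidates = [c for c in available_ah if c >= required]
    if not candidates:
        raise ValueError("no available battery is large enough")
    return min(candidates)


if __name__ == "__main__":
    need = required_ah(0.1, 24)  # 100 mA for 24 hours
    sizes = [1.5, 2.0, 2.5, 3.0, 3.5]  # hypothetical sizes on offer
    print(f"need {need:.1f} Ah -> buy {pick_battery(need, sizes)} Ah")
```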

The first rainy day comes, and the station goes offline after 18 hours. What went wrong?

Watt-hours

Amp-hours don’t account for voltage, while power in watts = voltage * amps! Amp-hours are great for single cells, but battery packs can be built from multiple cells. Do you remember the example “800mAh 3S1P 30C Lipo”? 3S1P means 3 cells connected in series (and 1 in parallel)! So in the end, our measly 800 mAh battery pack holds almost the same amount of ENERGY as our single-cell 2.5 Ah battery!

Watt-hours are calculated by multiplying the nominal voltage of the battery cell or pack by its Ah rating. A lithium-ion cell’s nominal voltage is around 3.7 V, so our single cell’s energy capacity is 3.7 V * 2.5 Ah = 9.25 Wh. The small battery pack holds 3 * 3.7 V * 0.8 Ah = 8.88 Wh.
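The watt-hour calculation for an “xSyP” pack, as a minimal sketch. It assumes the 3.7 V nominal cell voltage from the text and the usual convention that series cells add voltage while parallel cells add capacity; the function name is illustrative.

```python
LI_ION_NOMINAL_V = 3.7  # nominal lithium-ion cell voltage used in the text


def pack_wh(cell_ah: float, series: int = 1, parallel: int = 1,
            cell_voltage: float = LI_ION_NOMINAL_V) -> float:
    """Energy (Wh) of an "xSyP" pack = nominal pack voltage * pack capacity.

    Cells in series add voltage; cells in parallel add capacity (Ah).
    """
    pack_voltage = series * cell_voltage
    pack_ah = parallel * cell_ah
    return pack_voltage * pack_ah


if __name__ == "__main__":
    print(f"single 2.5 Ah cell: {pack_wh(2.5):.2f} Wh")
    print(f"800 mAh 3S1P pack:  {pack_wh(0.8, series=3):.2f} Wh")
```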

So, if our weather station runs on 5 V and draws 100 mA, its load is 0.5 W. 9.25 Wh divided by the 0.5 W load gives 18.5 hours, even with a perfect, lossless voltage converter, and that’s not 24!
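The energy-based runtime, plus the energy the station would actually need for 24 hours, as a short sketch. The `converter_efficiency` parameter is an addition for illustration (1.0 means lossless, as in the paragraph above); any lower value you plug in is your own assumption, not a figure from this article.

```python
def runtime_hours_wh(battery_wh: float, load_v: float, load_a: float,
                     converter_efficiency: float = 1.0) -> float:
    """Runtime (h) = usable energy (Wh) / load power (W).

    converter_efficiency=1.0 models a perfect, lossless converter.
    """
    load_w = load_v * load_a
    return battery_wh * converter_efficiency / load_w


def required_wh(load_v: float, load_a: float, hours: float) -> float:
    """Energy needed to run a load of load_v * load_a watts for `hours`."""
    return load_v * load_a * hours


if __name__ == "__main__":
    print(f"9.25 Wh at 5 V / 100 mA: {runtime_hours_wh(9.25, 5.0, 0.1):.1f} h")
    print(f"needed for 24 h: {required_wh(5.0, 0.1, 24):.1f} Wh")
```

Sizing by energy, the station needs 12 Wh, which at 3.7 V nominal is roughly 3.2 Ah rather than 2.4 Ah.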


Why don’t we only use watt-hours?

While most people find the watt-hour calculation easier, battery pack builders and single-cell users find the amp-hour rating useful. Pack builders need it because a pack must be built from cells with matching capacity; for single lithium cells, the reason is a bit trickier.

Converting voltages

Two main types of power converters are used in power supplies for microelectronics: the high-efficiency switching power supply and the so-called LDO (a type of linear voltage regulator).

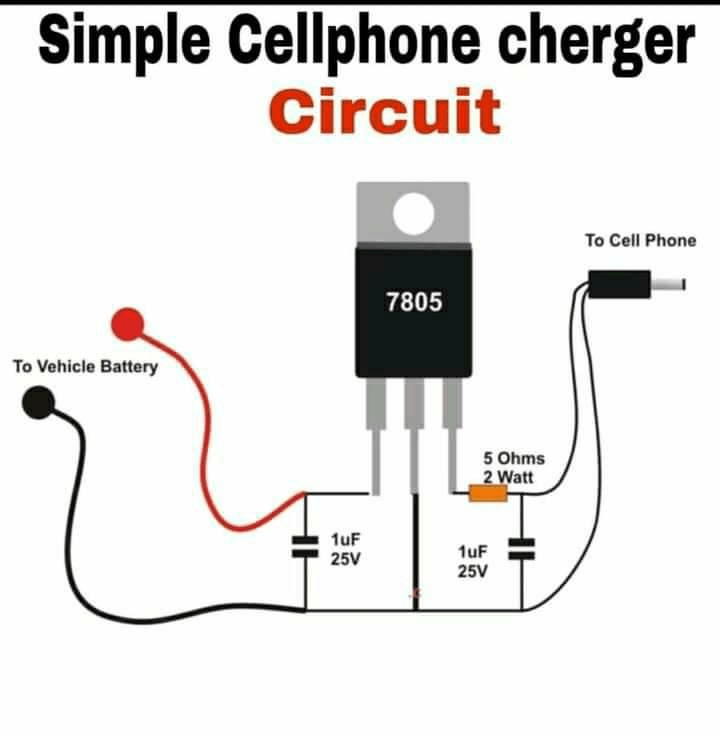

While switching power supplies have great efficiency, LDOs (short for Low Drop-Out), you guessed right, usually have terrible efficiency when the input voltage is much higher than the output. These devices regulate the output voltage by dissipating (converting to heat) the voltage difference between the input and output, multiplied by the current flowing through them. To demonstrate, let’s make a cheap car phone charger that provides 5 V, 1 A.


Let’s assume 12 V as the source voltage in a car (it can go up to 13.6-13.8 V) and a 1 A load (an LDO’s input and output currents are practically the same). The output is 5 W (5 V * 1 A), and the losses are (12 V - 5 V) * 1 A = 7 W, which means an efficiency of only about 42%. Just terrible. Switching power supplies easily achieve 80% efficiency and can reach 90% with minimal effort, meaning a switching power supply would only produce roughly 0.55-1.25 W of loss. That’s a HUGE difference.
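The car charger comparison, written out as a sketch. The LDO model ignores the regulator’s own quiescent current, and the switcher model simply treats efficiency as output power divided by input power; both are simplifications, and the function names are illustrative.

```python
def ldo_loss_w(v_in: float, v_out: float, i_out: float) -> float:
    """Linear regulator (LDO): input current ~= output current, so the
    voltage difference times the current becomes heat.
    The regulator's own quiescent current is ignored."""
    return (v_in - v_out) * i_out


def ldo_efficiency(v_in: float, v_out: float) -> float:
    """With equal input and output current, efficiency is just Vout / Vin."""
    return v_out / v_in


def switcher_loss_w(p_out_w: float, efficiency: float) -> float:
    """Switching converter: input power = output power / efficiency."""
    return p_out_w / efficiency - p_out_w


if __name__ == "__main__":
    print(f"LDO 12 V -> 5 V at 1 A: {ldo_loss_w(12, 5, 1):.2f} W lost, "
          f"{ldo_efficiency(12, 5):.0%} efficient")
    for eff in (0.8, 0.9):
        print(f"switcher at {eff:.0%}: {switcher_loss_w(5.0, eff):.2f} W lost")
```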

Still, LDOs are widely used, even in portable applications where efficiency should be key: a bigger battery means a bulkier device, and customers love small, light gadgets in their pockets. The reason: low-power operation and idle load.

Switching power supplies have to be smart, so they are more complicated, and their control circuitry has a significant power consumption of its own, even without any load! This is negligible in normal operation or at higher loads (other losses can be much bigger), but when the device is in a low-power state, it barely needs any power, and the switching power supply’s own consumption becomes a burden!
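To see why a fixed idle consumption hurts at light load, here is a deliberately crude model that is not from the article: a constant overhead for the control circuitry plus a constant conversion efficiency. The 5 mW overhead and the 90% conversion efficiency are hypothetical numbers chosen only to illustrate the trend.

```python
def efficiency_with_overhead(p_out_w: float, p_overhead_w: float,
                             conversion_eff: float = 0.9) -> float:
    """Crude model of a switching supply: a fixed overhead for the control
    circuitry plus a constant conversion efficiency for the delivered power."""
    p_in_w = p_out_w / conversion_eff + p_overhead_w
    return p_out_w / p_in_w


if __name__ == "__main__":
    OVERHEAD_W = 0.005  # hypothetical 5 mW controller overhead
    for label, p_out in (("active, 0.5 W", 0.5), ("sleeping, 0.5 mW", 0.0005)):
        eff = efficiency_with_overhead(p_out, OVERHEAD_W)
        print(f"{label}: {eff:.0%} overall efficiency")
```

With these made-up numbers the supply stays near 90% while the device is active, but drops below 10% once the load falls to the same order of magnitude as the overhead.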

Source: ATmega328P datasheet

The example is from a fairly dated microcontroller, but the proportions are just as huge, if not bigger, for newer devices: a thousandfold difference between operating and “sleeping” power consumption! Switching power supplies just can’t operate at high efficiency over such a wide range. Neither can LDOs, but with an LDO we at least cut the idle load.

Calculating time with mAh

Do you remember the weather station’s 100 mA average load? It’s a common technique to put the microcontroller (the brain of the gadget) into sleep mode and wake it up periodically. Microcontrollers barely consume any power on standby, and when it’s time, they do the work quickly, then go back to sleep. Our example may need 1 A for 0.1 second and then sleep for 0.9 second! It’s not economical to keep a switching power supply running when it’s not really needed 90-99.9% of the time (depending on the application)!
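The 100 mA average follows from a time-weighted average over one wake/sleep cycle. This sketch assumes zero sleep current for the headline number; a real sleep current (whatever your datasheet says) can be passed in instead. Function names are illustrative.

```python
def average_current_a(active_a: float, active_s: float,
                      sleep_a: float, sleep_s: float) -> float:
    """Time-weighted average current over one wake/sleep cycle."""
    period_s = active_s + sleep_s
    return (active_a * active_s + sleep_a * sleep_s) / period_s


def sleep_fraction(active_s: float, sleep_s: float) -> float:
    """Share of each cycle spent sleeping."""
    return sleep_s / (active_s + sleep_s)


if __name__ == "__main__":
    # 1 A for 0.1 s, then 0.9 s of sleep; sleep current assumed to be zero
    print(f"average: {average_current_a(1.0, 0.1, 0.0, 0.9) * 1000:.0f} mA, "
          f"asleep {sleep_fraction(0.1, 0.9):.0%} of the time")
```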

So in many cases we simply accept the inefficiency during the short operating window to avoid high idle consumption. And since an LDO only drops the voltage (the current flows through it unchanged), the calculation is plain and simple: measure or calculate the average load current, then just divide the battery capacity by it!

In our example, a 2500 mAh battery with a 100 mA average load would last about 25 hours. But beware:

- this only works with an LDO

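- LDOs need some difference between the input and output voltage (the dropout voltage)

- in a 3.3 V system, the LDO stops regulating once the battery falls below 3.3 V plus the dropout voltage, but a lithium battery has to discharge down to about 2.8 V to deliver its rated capacity, so you have to calculate with much less usable capacity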
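The LDO runtime rule and its caveats, as a minimal sketch. The 0.2 V dropout and the 70% usable fraction are hypothetical placeholders: the real dropout comes from your regulator’s datasheet, and the usable fraction has to be read from the battery’s discharge curve at the minimum voltage the LDO needs.

```python
def ldo_min_battery_v(v_out: float, dropout_v: float) -> float:
    """Lowest battery voltage at which the LDO can still hold its output."""
    return v_out + dropout_v


def ldo_runtime_hours(capacity_mah: float, avg_load_ma: float,
                      usable_fraction: float = 1.0) -> float:
    """With an LDO, battery current ~= load current, so runtime is simply
    usable capacity / average current. usable_fraction is the share of the
    rated capacity delivered before the battery falls below
    ldo_min_battery_v(); read it from the battery's discharge curve."""
    if not 0.0 < usable_fraction <= 1.0:
        raise ValueError("usable_fraction must be in (0, 1]")
    return capacity_mah * usable_fraction / avg_load_ma


if __name__ == "__main__":
    DROPOUT_V = 0.2  # hypothetical dropout voltage; check your LDO's datasheet
    print(f"battery must stay above {ldo_min_battery_v(3.3, DROPOUT_V):.1f} V")
    print(f"ideal: {ldo_runtime_hours(2500, 100):.0f} h")
    # 0.7 is a made-up usable fraction, only to show the effect
    print(f"with 70% usable: {ldo_runtime_hours(2500, 100, 0.7):.1f} h")
```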